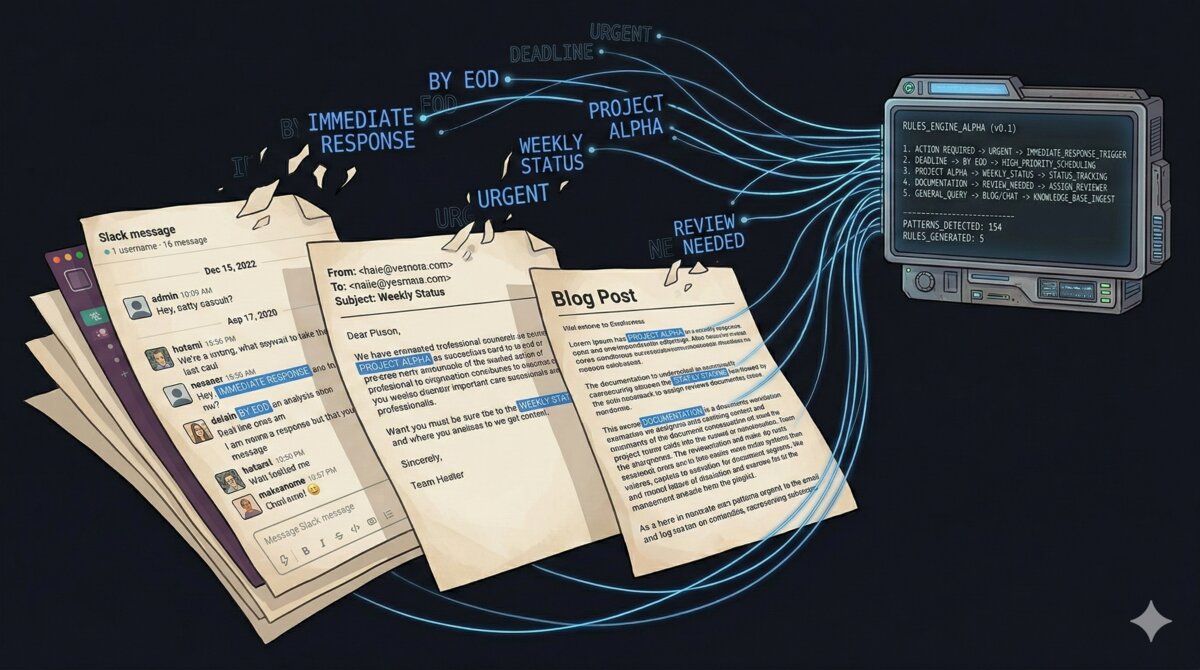

Claude Code has a plugin system that lets you define reusable skills as markdown files. A voice skill teaches Claude your writing patterns so its output reads like you wrote it, not like a chatbot did. I built one (510 lines, open source), but most of the effort went into the extraction process: running Claude over 15+ of my own writing samples across 6 content types to find patterns I didn’t know I had, then iterating on the results until the output consistently sounded like me. This post covers that process.

The problem with default AI output

All LLM output has a detectable signature. Kobak et al. (2025) and Liang et al. (2024) measured this: specific words are statistically overrepresented in AI-generated text. “Delve” appears 25x more frequently than in human writing. “Utilize” where any human would write “use.” “Leverage” as a verb for everything. Em dashes as the default clarification punctuation. Paragraphs that all land at the same length.

These patterns are consistent across models and prompts because they’re baked into the training distribution. A generic “write in my style” prompt doesn’t fix them: Claude will still reach for “it’s important to note” and “navigate the complexities” unless you explicitly tell it not to. A voice skill is that explicit instruction, loaded automatically every time Claude writes for you.

The hard part is extracting the right rules from your actual writing.

Collecting the corpus

Before you can extract voice patterns, you need writing samples. The extraction process I built (a ~950-line template that runs as a series of Claude Code prompts) has specific rules for corpus preparation:

Minimum bar: 10+ documents across 2+ content types. “Content type” means the format: blog posts, Slack messages, emails, docs, forum comments. You need the diversity because a Slack message and a blog post use different registers, but the underlying voice is the same person. If you only sample one format, you capture the format’s conventions (short paragraphs, emoji, no greeting) rather than the actual voice underneath.

The directory structure matters:

    corpus/
    ├── blog/
    │   ├── building-data-platform.md
    │   └── running-with-power.md
    ├── slack/
    │   └── migration-announcement.md
    ├── email/
    │   └── client-quarterly-update.md
    └── reddit/
        └── cycling-forum-replies.md

Three rules that look obvious but aren’t:

- Authenticity matters. AI-generated content in the corpus poisons the extraction. If you used Claude to help write a blog post, don’t include it. The whole point is capturing YOUR patterns, not Claude’s patterns reflected back.

- Recency preference. Writing styles change. Samples from the last 2 years are worth more than stuff you wrote 5 years ago.

- Length variety. Voice manifests differently in a 3-sentence Slack message vs. a 2,000-word blog post. Include both.

For my own skill, I started with 15+ samples across blog posts, Slack messages, client emails, and internal docs. I later added Reddit comments and Teams/Slack chat messages during calibration (more on that in Pass 3).
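The minimum bar is easy to check before running any extraction prompt. Here is a minimal sketch, assuming the layout above (one subdirectory per content type, samples stored as `.md` files). The function names and constants are illustrative and are not part of the extraction template.

```python
from collections import Counter
from pathlib import Path

MIN_DOCS = 10   # "10+ documents"
MIN_TYPES = 2   # "2+ content types"


def summarize_corpus(root):
    """Count sample files per content type (one subdirectory per type)."""
    counts = Counter()
    for path in Path(root).glob("*/*.md"):
        counts[path.parent.name] += 1
    return counts


def meets_minimum_bar(counts):
    """True when the corpus has enough documents and enough content types."""
    return sum(counts.values()) >= MIN_DOCS and len(counts) >= MIN_TYPES
```

Calling `summarize_corpus("corpus")` gives a per-format count, which also makes it obvious when one format dominates the sample.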

Pass 1: what Claude sees in your writing

The first pass is fully automated: 2 prompts that Claude Code runs sequentially. You paste them and let Claude work.

Corpus analysis

The first prompt tells Claude to read every file in the corpus and analyze your writing across 8 dimensions:

- Sentence patterns: average length, variation, parenthetical asides, list handling

- Opening patterns: how you start each format (blog vs. Slack vs. email)

- Vocabulary fingerprint: recurring words, technical term handling, code-switching

- Structural patterns: information ordering, use of headers, bullets, visual aids

- Tone markers: formality per format, humor, directness, handling disagreement

- Formatting habits: bold vs. caps vs. italics, punctuation quirks, emoji usage

- Language-specific patterns: code-switching, greeting conventions, formality differences

- LLM-ism detection: flagging any patterns that look like AI-generated text in the corpus

Claude writes a voice profile with everything it found across all your samples.

In my case, Claude found patterns I was completely unaware of: French-influenced punctuation habits (putting a space before ! and ?), a tendency to use ALL CAPS for selective emphasis instead of bold, “ahah” instead of “haha” (French spelling). These are exactly the patterns you’d never think to include if you wrote the skill from assumptions alone, because you don’t notice your own tics.

The same prompt also handles platform filtering: separating your genuine voice patterns from platform conventions. A short opening line in a Slack message isn’t your voice. It’s Slack’s convention. The pattern of always putting a “Quick q:” prefix before a question in chat, though: that’s you. Each pattern gets classified as VOICE (genuine), PLATFORM (format convention), or BORDERLINE.

Initial SKILL.md generation

The second prompt takes the analysis and produces a draft SKILL.md. It follows a skeleton structure: ban lists first (position matters: earlier constraints are more effective), then anti-performative rules, then core voice patterns, then format-specific modes.

The draft is never right on the first try. It’s a starting point.

Pass 2: the human review

This is where you read the draft SKILL.md and tell Claude what it got wrong. The feedback uses 4 categories:

- WRONG: “This pattern isn’t mine. Remove it.”

- OVERSTATED: “I do this occasionally, not constantly.”

- MISSING: “I always do X and it’s not in here.”

- NEEDS_NUANCE: “This is right for blog posts but wrong for Slack.”

A concrete example from my own skill: the initial extraction said I use “hyphens with spaces for interjections - like this.” Wrong. I actually use colons for explanations: like this. The same commit (Feb 9) also added a pattern the draft was missing entirely: I write affirmatively, not through rhetorical question setups. “We realized we were burning budget” instead of “We took a step back and asked ourselves: what are we actually getting?” That absence turned into a whole new SKILL.md section with wrong/right examples.

You can also add new corpus samples at this stage if you realize there are gaps. I didn’t need to in Pass 2, but I added samples in Pass 3 when I discovered missing format coverage.

Claude takes your feedback, re-analyzes where needed, and produces a revised SKILL.md. Mine went from 333 to 404 lines: 71 lines of new rules that the automated extraction missed because they needed a human to spot them.

Pass 3: calibration

Claude generates calibration samples across all the formats you defined: a blog post opening, a Slack announcement, a client email, a forum comment. You read each one and mark it up:

- GOOD: sounds like you wrote it

- CLOSE: almost right, a few tweaks needed

- OFF: doesn’t sound like you at all

Each issue gets a specific tag: TOO_FORMAL, TOO_CASUAL, WRONG_WORD, LLM_ISM, NOT_ME. The tags map directly to SKILL.md sections, which makes fixing fast:

- TOO_FORMAL / TOO_CASUAL: adjust format-specific modes

- WRONG_WORD: add to ban list or fix vocabulary patterns

- LLM_ISM: expand the ban list

- NOT_ME: revise core voice patterns
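The tag-to-section mapping above is small enough to write down as a lookup table. This is a minimal sketch for collecting calibration notes into a to-do list per SKILL.md section. The function name and the sample notes are hypothetical; only the tags and sections come from the list above.

```python
from collections import defaultdict

# Tag -> SKILL.md section to revise, as listed above.
TAG_TO_SECTION = {
    "TOO_FORMAL": "format-specific modes",
    "TOO_CASUAL": "format-specific modes",
    "WRONG_WORD": "ban list / vocabulary patterns",
    "LLM_ISM": "ban list",
    "NOT_ME": "core voice patterns",
}


def group_by_section(issues):
    """Group (tag, note) pairs by the SKILL.md section they point at."""
    todo = defaultdict(list)
    for tag, note in issues:
        todo[TAG_TO_SECTION[tag]].append(note)
    return dict(todo)


# Hypothetical notes from one calibration round.
issues = [
    ("TOO_FORMAL", "Slack announcement reads like a press release"),
    ("LLM_ISM", "'navigate the complexities' in the client email"),
    ("TOO_CASUAL", "client email opens with a joke"),
]
todo = group_by_section(issues)
```

Grouping this way means each SKILL.md section gets edited once per round, with all of its notes in view.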

For my skill, the calibration round on Feb 10 was the biggest single change. I’d added Reddit comment samples and Teams/Slack chat messages to the corpus, and the re-analysis found patterns that simply don’t appear in blog posts or client emails: stretched words for emphasis (“niiiice”), rapid-fire short messages, life context as availability explanation. It also produced 2 new format modes (Interactive/Conversational for Reddit and forums, Chat/DMs for Slack and WhatsApp) with distinct registers. The skill went from 404 to 469 lines.

The final iteration (Feb 15) added inclusive language rules: terminology replacements (ban list instead of blacklist, allow list instead of whitelist), gender-neutral defaults, and inclusivity guidelines. That brought it to 510 lines.

The git history shows the full arc:

| Date | Lines | What changed |
|---|---|---|
| Feb 7 | 333 | Initial extraction from 15+ writing samples across 4 content types |
| Feb 9 | 404 | Pass 2 fixes: colon pattern (not hyphens), added affirmative-writing section with wrong/right examples, banned question-led setups, added voice modes |
| Feb 10 | 469 | Pass 3 calibration: added Reddit + Slack corpus, 5 new patterns, 2 new format modes |
| Feb 15 | 510 | Added inclusive language rules: terminology replacements, gender-neutral defaults |

What goes in a voice skill

The extraction process produces a SKILL.md with 5 sections, ordered by impact (Claude reads top-down).

Section 1: LLM-ism ban lists

This goes first because position matters: the earlier Claude encounters a ban rule, the less likely it is to produce the banned word at all. The Kobak and Liang research gives you a starting list. Organize by part of speech:

    ### Banned words

    **Adjectives:** delve/delving, actionable, bespoke, captivating,
    cutting-edge, game-changing, groundbreaking, holistic, impactful,
    innovative, insightful, meticulous, multifaceted, nuanced, paramount,
    pivotal, profound, remarkable, revolutionary, seamless, transformative,
    unparalleled, vibrant

    **Verbs:** amplify, bolster, champion, craft (as "craft a solution"),
    delve, elevate, embark, empower, foster, harness, leverage, navigate
    (metaphorical), pioneer, resonate, showcase, spearhead, supercharge,
    transcend, underscore, unleash, unlock, unveil, utilize (just say "use")

Individual words aren’t enough. LLMs also have characteristic phrase patterns: scene-setting openers (“In today’s fast-paced world…”), importance-flagging filler (“It’s important to note…”), structural gimmicks (compulsive triads, machine-gunned short sentences). Ban those too.

Then formatting rules. The em dash rule alone makes a visible difference. Em dashes are the #1 formatting tell of AI text because LLMs use them as a universal clarification tool. Replacing them with colons (or just rewriting the sentence) immediately makes output read more human.
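The ban list and the em dash rule can also be checked mechanically on any draft, independently of the skill. Below is a minimal sketch: the word and phrase lists are a small illustrative subset of the lists above, and the stem matching is deliberately crude (an assumption of this example, not something the skill does).

```python
import re

# Illustrative subset of the ban list; extend with your own entries.
BANNED_WORDS = ["delve", "leverage", "utilize", "seamless", "pivotal"]
BANNED_PHRASES = ["it's important to note", "in today's fast-paced world"]
EM_DASH = "\u2014"


def find_llm_isms(text):
    """Return (kind, match) pairs for banned words, phrases, and em dashes."""
    # Treat curly apostrophes as straight so phrases match either way.
    lowered = text.replace("\u2019", "'").lower()
    hits = []
    for word in BANNED_WORDS:
        # Drop a trailing "e" so "delve" also catches "delving" and "delved".
        stem = word[:-1] if word.endswith("e") else word
        for m in re.finditer(rf"\b{re.escape(stem)}\w*", lowered):
            hits.append(("word", m.group(0)))
    for phrase in BANNED_PHRASES:
        if phrase in lowered:
            hits.append(("phrase", phrase))
    hits.extend(("em dash", EM_DASH) for _ in range(text.count(EM_DASH)))
    return hits
```

A check like this is useful as a regression test after each SKILL.md revision: generate a few samples, run them through it, and see whether the hit count goes down.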

Section 2: Anti-performative rules

When Claude imitates a writing style, it identifies your patterns and then exaggerates them. If you use parenthetical asides occasionally, Claude will use them every single sentence. If you opened one post with “So,” Claude will open every post with “So.” Natural habits become theatrical signature moves.

The fix is explicit: tell Claude not to manufacture catchphrases from one-time usage, not to announce the narrative, not to perform casualness. Without this section, the output sounds like a copywriter doing an impression rather than the actual person.
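One way to spot this exaggeration is to compare how often a habit shows up in a generated draft against how often it shows up in your own writing. The sketch below does this for parenthetical asides only. The sentence splitting is crude and the `factor` threshold is an arbitrary assumption; treat it as an illustration of the idea, not as part of the skill.

```python
import re


def aside_rate(text):
    """Parenthetical asides per sentence (crude split on . ! ?)."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    asides = len(re.findall(r"\([^()]*\)", text))
    return asides / len(sentences) if sentences else 0.0


def is_exaggerated(corpus_text, draft_text, factor=2.0):
    """Flag a draft whose aside rate exceeds `factor` times the corpus rate."""
    return aside_rate(draft_text) > factor * aside_rate(corpus_text)
```

The same comparison works for any countable habit, such as posts opening with “So,” or words in ALL CAPS.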

Section 3: Core voice patterns

Concrete rules about how you write: sentence structure, vocabulary fingerprint, opening patterns per format, structural habits. The extraction process discovers most of these automatically, but the human review catches the ones Claude missed. These should be prescriptive, not descriptive:

**Sentence structure:**

- Mix of long explanatory sentences and short punchy ones

- Parenthetical asides for both clarification and humor

- Colons for explanations: like this

**Vocabulary:**

- Technical terms used precisely, no dumbing down

- Real numbers always: "4 hours" not "a few hours"Section 4: Format-specific modes

Same voice, different registers. Without this section, Claude applies every rule uniformly. The result: output that sounds right for one format and wrong for all others.

Define a default mode (mine is “informational”: direct, no narrative arc) and explicit overrides. Blog posts can use narrative structure. Slack messages start with context. Client emails get one opening pleasantry max. Reddit comments open with a genuine reaction before substance.

The default mode matters. If you set “informational” as default, Claude writes everything direct and factual unless you ask for a blog post. If you set “narrative” as default, your Slack messages get story arcs.

Section 5: Adaptation rules

The last section tells Claude how to apply everything above: ask about the audience if not obvious, default to direct, maintain the same voice across languages, and (critically) do two separate review passes after writing. One for LLM-isms, one for performative writing. They’re different failure modes and a single pass tends to catch one category and miss the other.

Real-world output comparison

I gave Claude the same raw data from a Strava ride (stats, comments, weather data) and asked for a journal entry twice: once without the voice skill, once with it.

Without voice skill:

September 3, 2024 — Yeroskipou, Cyprus

“From Sea to Sky” (almost)

Attempted the big one today: riding from the coast up to the highest point in Cyprus. Started at 6:27 AM from Yeroskipou to beat the heat — didn’t help much, it was still 30°C with the sun absolutely cooking.

125 km with 2,133 meters of climbing, including a single monster climb of 1,358 meters. Got within 7 km of the summit before the legs completely shut down — couldn’t hold even easy power anymore. At 90 kg, gravity wins eventually.

The numbers tell the story: nearly 6 hours of moving time, averaging just 13.3 km/h on the climbs. Normalized power sat at 194W (2.1 W/kg) with an average HR of 154 BPM — heart rate hit 183 at the max. Burned through 3,277 kJ, roughly 20 beers’ worth of energy. TSS of 219 confirms what my legs already knew: this was a deep dig.

The descent should have been the reward, but 25-42 km/h headwinds made it genuinely sketchy — 69% of the ride was into a headwind, with the longest stretch lasting over 2 hours and 18 minutes straight. MyWindsock rated the weather impact at 7.6%, pushing the “feels like” elevation up to 2,351 meters.

Extra mental load from driving on the left — Cyprus follows the UK convention, so every roundabout and turn required full concentration on a rental Factor O2 I was still getting used to.

Toughest ride I’ve ever done. Massive elevation, relentless wind, scorching heat, wrong side of the road. Didn’t summit, but honestly? Still a perfect ride. Sometimes the ones that break you are the ones worth writing about.

With voice skill:

September 3, 2024 : Yeroskipou, Cyprus

Woke up at 6AM to try and summit Mount Olympus on a rental Factor O2. 125km, 2,133m of climbing. Didn’t make it : legs completely shut down 7km before the top. Couldn’t hold even easy power at that point.

The numbers tell the story. 5h55 moving time, 53% of it climbing, average climbing speed of 13.3 km/h. NP of 194W for an IF of 0.61 and 219 TSS. At 100kg that’s only 2.1 W/kg normalized : not enough to grind up a 1,358m single climb in 30°C heat. The myWindsock data was brutal too : 69% headwind (25 to 42.7 km/h), longest headwind stretch over 2 hours. Feels-like elevation was 2,351m with the weather factored in.

The descent was genuinely sketchy. Strong crosswinds on narrow mountain roads, and driving on the left means your instincts are wrong for every single corner. Had to stay laser focused the whole way down. Max speed hit 62.2 km/h which felt like a lot more than it usually does.

Heart rate averaged 154 BPM over nearly 6 hours with a 20min best of 174 and max at 183. 3,277 kJ burned. That’s roughly 20 beers worth of energy (the app’s estimate, not mine). HRSS of 374 and TRIMP of 625 : both the highest I’ve ever recorded.

Hardest ride I’ve done. The combination of heat, wind, elevation, left-side driving, and a bike I’d only ridden 130km on before made everything harder than pure numbers suggest. Weight is clearly the limiting factor for this kind of effort : at 100kg, anything above 8-10% gradient becomes a war of attrition that I’m going to lose.

Still picked up 69.32 new Wandrer kilometers and 25% of some Greek-named community I can’t pronounce.

Need to come back lighter.

The differences: no em dashes, colons for clarifications, more technical shorthand (NP, IF, TSS without explanation), parenthetical asides (“the app’s estimate, not mine”), the self-aware closing. The first version wraps up with a generic inspirational line (“sometimes the ones that break you are the ones worth writing about”). The second just says “need to come back lighter.” The first invents a title. The second starts talking.

Both versions still get flagged as AI-generated by detection tools. But the voice skill version scores 30-40% lower on certainty. Still AI, but less generically so. The ban lists strip out the statistical markers that detectors rely on, and the format-specific rules prevent the structural uniformity (equal paragraph lengths, triadic lists, setup-punchline pattern) that’s the other major detection signal.

Fooling detectors isn’t the point, though. The goal is sounding like yourself.
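The structural uniformity mentioned above (equal paragraph lengths) can be put into a number. Here is a minimal sketch using the coefficient of variation of paragraph word counts, assuming paragraphs are separated by blank lines. This metric is my illustration and is not what any particular detection tool computes.

```python
from statistics import mean, pstdev


def paragraph_length_variation(text):
    """Coefficient of variation of paragraph word counts (0 = all equal)."""
    lengths = [len(p.split()) for p in text.split("\n\n") if p.strip()]
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)
```

Values near 0 mean every paragraph is about the same size. Running it over your own corpus first gives a baseline for what your normal variation looks like.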

Installation

A Claude Code skill lives inside a plugin directory:

    plugins/your-voice/
    ├── .claude-plugin/
    │   └── plugin.json        # plugin metadata
    └── skills/
        └── your-voice/
            └── SKILL.md       # the voice skill itself

The plugin.json registers the plugin with a name, version, and description. The SKILL.md has YAML frontmatter (trigger conditions: when should Claude load this skill) and the skill body (the actual instructions). The description field in the frontmatter controls when Claude activates: be generous with triggers so it fires for any writing request.
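To make the two files concrete, here is a minimal sketch that writes placeholder versions of both into the layout shown above. The version string, the `name` frontmatter key, and the body text are assumptions for illustration; check the reference repository for the exact fields it uses. The description is written into the YAML unquoted, so keep it free of colons or quote it yourself.

```python
import json
from pathlib import Path


def scaffold(root, name, description):
    """Create the plugin layout with placeholder file contents."""
    plugin_dir = Path(root) / "plugins" / name
    meta_dir = plugin_dir / ".claude-plugin"
    skill_dir = plugin_dir / "skills" / name
    meta_dir.mkdir(parents=True, exist_ok=True)
    skill_dir.mkdir(parents=True, exist_ok=True)

    # plugin.json: name, version, description (placeholder values)
    meta = {"name": name, "version": "0.1.0", "description": description}
    (meta_dir / "plugin.json").write_text(json.dumps(meta, indent=2) + "\n")

    # SKILL.md: YAML frontmatter first, then the skill body
    skill = (
        "---\n"
        f"name: {name}\n"
        f"description: {description}\n"
        "---\n\n"
        "Voice skill body goes here.\n"
    )
    skill_path = skill_dir / "SKILL.md"
    skill_path.write_text(skill)
    return skill_path
```

For example, `scaffold(".", "your-voice", "Use for any writing request, including blog posts, emails and chat messages")` produces the tree above with both files filled in.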

Create the structure and test locally:

    mkdir -p plugins/your-voice/{.claude-plugin,skills/your-voice}
    # Write your plugin.json and SKILL.md, then:
    /plugin marketplace add ./
    /plugin install your-voice@your-marketplace

Or install the reference implementation to study the structure:

    /plugin marketplace add sam-dumont/claude-skills
    /plugin install sams-voice@sams-skills

Repository: github.com/sam-dumont/claude-skills

The sams-voice SKILL.md is 510 lines. Most of that (probably 80%) is ban lists and format-specific rules. The extraction template is also in the repo at plugins/sams-voice/templates/personal-voice-extraction-process.md: copy it to your machine, follow the prompts, and the 3-pass process described above runs end-to-end.